For all us frustrated licence payers, Beebhack aims to discuss what the BBC is afraid of mentioning; "This site is designed to be a resource for re-purposing on our own terms the BBC content that we, the licence payers have paid for."

VirusTotal (like Jotti's malware scanner) is a web-based virus scanner; "a service that analyzes suspicious files and facilitates the quick detection of viruses, worms, trojans, and all kinds of malware detected by antivirus engines." Simply, you upload a file through the web site, it passes your submission past ~30 commercial antivirus programs and reports the results back to you within two minutes. A remarkably simple way to check a file if you're feeling a bit suspicious. Curious? See a sample results page here (for a real virus file, in this case an MSN worm).

I know this has been around for a while, but it deserves some attention. Are you a Digg user? Do you want to visit a really popular site but it keeps on timing out or downloading really slowly? You could give the Coral CDN a go - in a nutshell, it's a free service anybody can use which lets you request web sites and files, all of which go through their own network of servers scattered around the world. The idea behind this is that at some point in their network, they're bound to have a machine geographically closer to the target location than your machine is, so it will speed up transfers.

It does work too, particularly for heavily-trafficked sites. It's simple to activate, too: just add .nyud.net to the end of any web address (so google.com would become google.com.nyud.net). .nyud.net:8090 and .nyud.net:8080 also work sometimes when just using .nyud.net doesn't, so give those a go too.
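If you'd rather not edit addresses by hand, the rewrite described above can be sketched in a few lines of Python. This is only an illustration: the function name `coralize` and its `port` argument are made up for this example, and it assumes the only change needed is the hostname suffix (plus an optional port such as 8080 or 8090).

```python
from urllib.parse import urlsplit, urlunsplit

CORAL_SUFFIX = ".nyud.net"  # the suffix described in the post


def coralize(url, port=None):
    """Return url with the Coral suffix appended to its hostname.

    Hypothetical helper for illustration only. Any port or login
    details in the original address are dropped.
    """
    # Bare names like "google.com" have no scheme, so assume plain http.
    parts = urlsplit(url if "://" in url else "http://" + url)
    host = parts.hostname or ""
    if not host:
        raise ValueError("no hostname in %r" % url)
    if not host.endswith(CORAL_SUFFIX):
        host += CORAL_SUFFIX
    netloc = host if port is None else "%s:%d" % (host, port)
    return urlunsplit((parts.scheme, netloc, parts.path, parts.query, parts.fragment))


print(coralize("google.com"))
print(coralize("http://example.com/page?x=1", port=8080))
```

The path and query string are passed through untouched, so deep links should keep working as long as the Coral network can fetch the original page.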

Simple to use, faster transfers and free! Next time a site gets slashdotted or dugg heavily, give it a try. Alternatively, you could give the Planetlab/CoDeeN free academic network a go, although that involves one extra step (choosing one of their server IPs and configuring it as a proxy server in your browser). For a comprehensive list of Planetlab/CoDeeN public proxies, use Samair's Proxy Checker (just look for any proxy with Planetlab/CoDeeN next to it).

Oh, and lastly, if you're after decluttering your browser home page, give Symbaloo a try.

A recent discussion on Slashdot (and reported on BoingBoing) raises an interesting question: with the commercial world moving ever more towards conditional licensing agreements for games, music, and ebooks, is there going to come a flash point whereby consumers realise they're paying for nothing more than thin air? Or, will the powers that be see the necessity to force a change in law to ensure that people still get what they (think they) are paying for?

It's one of the reasons I've shied away from music rental services (that, and the horrible DRM too), but it's also a driving force behind me actively avoiding ebook and digital media purchases for things like films and TV shows. If we're paying the same as we'd pay for physical media, but we're getting fewer rights in return (and often, not even a permanent digital copy) why should we even bother paying?

It's definitely one of the factors for the MP3 format sticking around as long as it has (and I fully support open* formats like FLAC and MP3, but I would only hand over money for music in FLAC format at a push for reasons of quality). I'd most definitely never enter into a licensing agreement between myself and a publisher - books are one of those things you can savour, hold in your hand, leaf through the pages or enjoy at your own pace. And, when I'm done with it, and if I don't want it, I can sell it on - can you do that with an ebook? Not likely.

Well, not yet, anyway... But isn't the whole point of digital media that by its very nature, it removes the element of scarcity? Surely the death knell of the humble printed book isn't already ringing?

Can there ever be 'digital rights'? (and can industry ever expect to be able to 'manage' these rather nebulous 'consumer rights' when, arguably, they themselves don't have the right in the first place?)

From the Gizmodo article,

In the fine print that you "agree" to, Amazon and Sony say you just get a license to the e-books—you're not paying to own 'em, in spite of the use of the term "buy." Digital retailers say that the first sale doctrine—which would let you hawk your old Harry Potter hardcovers on eBay—no longer applies. Your license to read the book is unlimited, though—so even if Amazon or Sony changed technologies, dropped the biz or just got mad at you, they legally couldn't take away your purchases. Still, it's a license you can't sell.

But is this claim legal? Our Columbia friends suggest that just because Sony or Amazon call it a license, that doesn't make it so. "That's a factual question determined by courts," say our legal brainiacs. "Even if a publisher calls it a license, if the transaction actually looks more like a sale, users will retain their right to resell the copy." Score one for the home team.

There's a kicker, though: If a court ruled with you on that front, you still can't sell reproductions of your copy, an illegal act tantamount to Xeroxing your Harry Potters. You'd have to sell the physical media where the "original" download is stored—a hard drive or the actual Kindle or Sony Reader. Our guess is that it only gets more complicated from here. What happens when the file itself resides only on some $20-per-month Google storage locker?

There's a full commentary on BoingBoing (and a link to the parent Slashdot story via the Gizmodo article).

Tags: CC, consumer rights, copyleft, copyright, creative commons, digital rights, drm, ebook, itu, Kindle, rights

Update, 18/05/2008: YouTube have slightly revised their interface, and are also rolling out a new player... The short instructions to see high quality videos: add &fmt=18 to the end of any YouTube URL and hit Enter to reload the video.

You'll then notice that a link should appear underneath the player saying "watch this in standard quality" - if so, you're watching the higher quality video, and clicking it will show the lower quality version you'd normally receive. You don't want to do this ;)

Going into your User Preferences (click on Account at the top of the page once you've logged in), going to the bottom of the page, clicking on Video Playback Quality and choosing the "I have a fast connection. Always play higher-quality video when it's available" option will sometimes force this option. Again, this is somewhat at YouTube's whim whether you get the high quality video or not, and I've noticed my account has been reset to the "Choose my video quality dynamically based on the current connection speed" option a couple of times now.

The longer explanation, and a commentary (with quality comparison) continues below.

I noticed the other day that YouTube's started to quietly trial higher quality video playback for an increasing amount of their video content. For me this is a long-overdue, very welcome upgrade. Low quality video and 64kbps MP3 audio are fine for the 1990s, but we're in the 21st century now thank you very much. Finally, Google gets with the plan... And here's the quick-'n-dirty hack to get higher quality playback even if YouTube doesn't want you to have it right now.

A few words before we get to the juicy bit: the experience isn't perfect yet, but this is a comparatively trivial (if annoying) issue for the time being. For videos 'ingested' (i.e. uploaded by users) into the YouTube backend that were encoded as native or anamorphic widescreen (16:9, like DVDs), the higher quality videos currently play back vertically stretched to a 4:3 aspect ratio, which I've concluded is due to the player not correctly handling the higher quality widescreen content. The most likely explanation is that it either doesn't respect the 'widescreen' flag in the video (if there is one) or it can't properly display widescreen videos that haven't been encoded in a letterboxed 4:3 aspect ratio.

(Haven't a clue what I'm on about? Along with the two links in the previous paragraph, this page has quite a nice visual side-by-side comparison of what anamorphically-encoded widescreen content looks like versus regular 4:3 video.)

I'm positive that the video aspect problem is a YouTube player problem because I downloaded the actual .flv video file with a bit of creative Wiresharking, and played it in a standalone player (I use the excellent free FLV Player from Martijn de Visser). The video displayed correctly - and it both looks and sounds fantastic.

I could actually put up with YouTube videos if they were all this quality to start with! With a decent original quality source, the quality's roughly comparable to 350MB TV episodes (or, if you're a UK web surfer, only slightly lower quality than the BBC's iPlayer streaming videos).

I've noticed this problem with the YouTube player more than once, including on content I've encoded and uploaded to YouTube myself (and I've filed a bug report), so hopefully this will be sorted out when the higher quality video is rolled out to more of YouTube's users. They can't leave YouTube with a broken player, and I doubt they'd reencode all of the widescreen video...

Anyway, on to the most important bit! Because the "watch this video in higher quality" links aren't always visible to everybody, I thought I'd point out that there's an easy way to force the higher quality stream every time you visit a page - just append "&fmt=18" (without the inverted commas) to the URL, and hit Enter to reload the page. (Yes, I know the 'hit Enter' part shouldn't need mentioning, but there are still some people who press the Go button with their mouse, my mum amongst them!)

If you're not too familiar with URLs, it's not hard - if you're passed a YouTube URL like http://uk.youtube.com/watch?v=wwJa1fftrr0, just add &fmt=18 to the URL so it now reads as http://uk.youtube.com/watch?v=wwJa1fftrr0&fmt=18... When you load that page, it'll show the video in higher quality (and show a "Watch this video in lower quality for faster playback." link underneath the video player). If the URL looks longer or has other crap on it (like referring URL info, or looks a bit like http://www.youtube.com/watch?v=bxvxSwUecWY#qIZOG5m5PCM), you can either delete all the stuff after the initial video ID (the alphanumeric characters straight after "?v="), or you can add &fmt=18 to the very end of the URL.
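For anyone who wants to script the URL tweak, here's a rough Python sketch. The helper name `force_high_quality` is invented for this example, and it makes one choice of its own: rather than tacking the parameter onto the very end, it puts fmt=18 in the query string ahead of any "#..." part, on the assumption that the server only ever sees the query string.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def force_high_quality(url, fmt="18"):
    """Return url with fmt=<fmt> set in its query string.

    Hypothetical helper for illustration only. An existing fmt
    value is replaced and any #fragment is kept at the end.
    """
    parts = urlsplit(url)
    # Keep every existing parameter except a previous fmt setting.
    query = [(key, value)
             for key, value in parse_qsl(parts.query, keep_blank_values=True)
             if key != "fmt"]
    query.append(("fmt", fmt))
    return urlunsplit((parts.scheme, parts.netloc, parts.path,
                       urlencode(query), parts.fragment))


print(force_high_quality("http://uk.youtube.com/watch?v=wwJa1fftrr0"))
print(force_high_quality("http://www.youtube.com/watch?v=bxvxSwUecWY#qIZOG5m5PCM"))
```

Running it twice on the same address is harmless, since an existing fmt value is swapped out rather than duplicated.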

Wired also have a few other methods you can employ to always get higher quality playback, including a couple of methods you might have overlooked.

What should be immediately noticeable is the audio quality, but videos with a lot of motion will benefit too. If the video doesn't load, the higher quality video might not be encoded yet, or there might be a backend problem... All I can suggest is that you try again later; this feature isn't even being advertised yet, so what do you expect? ;)

For the techies, the "watch this video in lower quality for faster playback" link both points to the URL with the &fmt=18 appended, and also toggles a JavaScript variable: onclick="changeVideoQuality(yt.VideoQualityConstants.LOW); return false;"

I've not checked, but I guess from the above that when you toggle the low quality video, the link is updated to set the value to 'VideoQualityConstants.HIGH'.

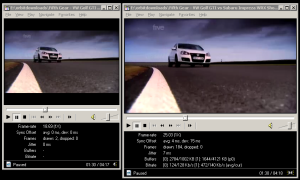

A word on bandwidth consumption... I'm not worried about Google's side of the deal, I'm sure they have ample bandwidth to cope with the higher quality - it's the end users who might get a bit of a shock when their next bill arrives from their ISP if they watch every video in high quality. As a guideline, watching high-quality videos on YouTube roughly doubles the amount of data downloaded for each video, so if you're on a limited-bandwidth broadband package (whyyyyy?!) then be mindful of the higher tariff this imposes on your limited available bandwidth. For the link I used above, a Fifth Gear clip called "VW Golf GTI vs Subaru Impreza WRX Shootout", the low quality video is 9.6MB, and the higher quality video comes in at 19.3MB. The video resolution is 480x264 pixels, encoded in H.264 (with an 'avc1' codec ID). The audio is AAC ('mp4a' codec ID). Here's how Media Player Classic reports it:

Audio: AAC 44100Hz stereo 125Kbps [(C) 2007 Google Inc. v06.24.2007.]

Video: MPEG4 Video (H264) 480x264 [(C) 2007 Google Inc. v06.24.2007.]

The average video bitrate clocks in at anything between 400kbps and 1Mbps, and that's not including the ~128kbps for the VBR audio. Yes, I said 128kbps audio at 44.1kHz! ("CD" quality on YouTube, finally!) The peak quality of the video is 977kbps, so we're dealing with much better raw quality. The higher quality video also has a 25fps framerate, not the 10-18fps of the original quality video. $deities be praised!

For comparison, here are the statistics for the original, low quality version of the same video:

Audio: MPEG Audio Layer 3 22050Hz mono [Audio]

Video: Flash Video 1 320x240 [Video]

Unlike the high quality video (which plays back in its original 16:9 aspect ratio), the original low quality video is also encoded as letterboxed 4:3. This is a far more inefficient use of bandwidth, and was obviously a kludgey workaround YouTube decided upon for early versions of the video player. This has had the unfortunate side effect of being incredibly annoying for people who have widescreen monitors, as you get a picture frame effect around all videos that have an original 16:9 AR. I can't wait for the day when they fix the video player and make it correctly display widescreen videos in a widescreen aspect ratio!
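To put a rough number on the letterboxing, here's a quick back-of-the-envelope sketch in Python. It assumes the 16:9 picture fills the full 320-pixel width of the 320x240 frame, and it only counts pixels: black bars compress very well, so the real bandwidth cost is likely smaller than the pixel share suggests. The function name `letterbox_waste` is made up for this example.

```python
from fractions import Fraction


def letterbox_waste(frame_w, frame_h, aspect_w=16, aspect_h=9):
    """Return (picture height in pixels, fraction of the frame spent on bars).

    Illustrative arithmetic only: assumes the picture spans the full
    frame width and is letterboxed top and bottom.
    """
    picture_h = min(Fraction(frame_w * aspect_h, aspect_w), Fraction(frame_h))
    bars = frame_h - picture_h
    return picture_h, bars / frame_h


picture_h, wasted = letterbox_waste(320, 240)
print(f"picture height: {picture_h} px, share of frame used by bars: {float(wasted):.0%}")
```

For the 320x240 stream that works out to a 180-pixel-tall picture, with a quarter of every frame given over to black bars.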

Finally, watching YouTube videos through your Wii is going to be a more enjoyable experience. Enjoy :)

Google: "I spy, with my little eye, something beginning with N..."

Wednesday, March 12, 2008

...And that N is Nanaimo. Google's been busy; its latest venture has been the analysis, assimilation and compilation of just about all public data for a town in British Columbia, Nanaimo. No doubt this was part of their Master Plan all along (what happened to Don't Be Evil?), but it seems that nothing is beyond their reach these days.

For example, want to know where the fire engines are, and how many calls there have been today, this week or this month? Just click through to their web site for realtime statistics. Want street-level imagery of the entire town? Fire up Google Earth and there you go. And if that made you go 'wow', that's not the only stuff they can show you:

"With Nanaimo, they have mapped nearly every conceivable thing using Google Earth and Google Maps," Michael Jones, Google Earth's chief technology officer, said last August at a conference in Vancouver. "Their citizens have more information about their city than the people of San Francisco."

All hail the all-seeing-Google! Google knows all! For a while now, Street View vans have been making their way through our towns and cities (my housemate recently showed me his street level journey into Las Vegas, from hotel to convention centre, after returning from this year's CES. It wasn't just aerial view, it was street by street and junction by junction, at ground level, in 3D - a very odd experience). At the same time, businesses are moving their email and work-related info over wholesale to Google-based platforms, plus we have the millions of individuals using Google by default as their launchpad into the Web - no doubt the big G's getting some very nice statistics to refine their advertising algorithms with. Does anybody else find it a little disconcerting that there's a select few companies who are getting the lion's share of our personal and public data, aggregating it and then doing some deep analysis on it to glean all they can from it? Where does it stop? Will Google or

The retention, analysis and resale of personal data is a hot topic at the moment, so it seems oddly appropriate that I be discussing an entire town's data being collected and analysed by the world's largest search engine (and one of the world's largest holders of 'anonymous' web usage statistics?). It's particularly pertinent given the recent debate and discussion surrounding the introduction of Phorm to several UK ISPs (and one ISP, TalkTalk, announcing that they'd changed their gameplan and were going to only introduce Phorm on an opt-in basis, as opposed to a blanket opt-in with a partial opt-out).

Presently, I don't think the majority of consumers understand or care about the extent to which they're profiled on the web, largely because they either consider it to be unrealistic (but the future is today!) or they haven't been properly educated about the benefits and dangers of what these kinds of ventures could entail.

Either way, for me, it's partly the ethics and partly the fact that it's a 'foot in the door' for just about anybody, public sector or Government, to ramp up the amount by which citizens are monitored, profiled, maybe even targeted for surveillance... Are we, as citizens, unwittingly handing over some of our crucial rights as individuals to the great cloud in the sky, only to realise too late we've handed over some of the very things that grant us privacy and peace of mind in our own lives?

Tags: Big Brother, DPA, freedom, google, individual, itu, laws, nanaimo, phorm, privacy, RIPA, security, the all-seeing eye

Image credit: Future Now

Google's much-vaunted mantra has always been 'Don't Be Evil' - yet many don't realise that they've been bending the rules for a fair while now. Ever typed in a domain name, got it slightly wrong (a simple typo) or put a hyphen in where it doesn't need one? (for example, typing verycool-products.com instead of verycoolproducts.org)... I know I have, and sometimes you get those annoying pages of adverts which try to fool you into thinking that they're somehow related to the official site you're looking for. This is arguably cybersquatting, but unscrupulous companies who engage in this prefer to call the practice "domain portfolio monetisation" - the long and short of it is that they most likely own many hundreds of domain names (tens of thousands in the largest portfolios), on which they plonk pages full of adverts.

Google, arguably the world's largest pay-per-click advertiser, is complicit in this through their Google AdSense for Domains program. Here's how they defend their 'service':

What is AdSense for domains?

AdSense® for domains allows domain name registrars and large domain name holders to unlock the value in their parked page inventory. AdSense for domains delivers targeted, conceptually related advertisements to parked domain pages by using Google’s semantic technology to analyze and understand the meaning of the domain names. Our program uses ads from the Google AdWords™ network, which is comprised of thousands of advertisers worldwide and is growing larger everyday. Google AdSense for domains targets web sites in over 25 languages, and has fully localized segmentation technology in over 10 languages.

So Google, if you plan on not being evil, when are you going to stop your less-than-savoury profitable endeavours such as this? Nobody likes being greeted with a page full of ads if they make a simple typing mistake (some of which occasionally try to hijack your browser or launch new popup windows), and they also cause a big technical problem for web coders and programmers who expect a standard DNS error message if an invalid domain is submitted.

People have to be really stupid (or desperate) if they click on obvious adverts on a parked web page - they usually bear no relevance whatsoever to any search terms you might have been looking for, and if you come to them blind (without being referred through a search engine's results page) you often haven't a clue what's going on. In historical terms, it can cause a real problem too: if you have old domain names printed on your literature or publications, what happens if one of those domain names gets sniped around renewal time and you can't get it back?

Going back to the issue of DNS errors instead of technically-valid junk pages - what's the problem with this? Well, say you're coding a web platform which accepts valid domain names as an input, which it then parses to check whether they're valid or not - with parked domains displaying pages full of adverts, the site might be tricked into thinking that it's a valid site (instead of an invalid, parked domain), so this can cause programmers real headaches.
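To make the headache concrete, here's a minimal Python sketch of the kind of check a coder might write. The function name `domain_resolves` and the swappable `resolver` argument are inventions for this example; the only real library call is the standard `socket.getaddrinfo`.

```python
import socket


def domain_resolves(hostname, resolver=socket.getaddrinfo):
    """Return True if hostname resolves to at least one address.

    Illustrative sketch only. The resolver argument exists so the
    lookup can be swapped out, for example in tests.
    """
    try:
        # A normal DNS failure surfaces as socket.gaierror.
        return len(resolver(hostname, None)) > 0
    except socket.gaierror:
        return False
```

A call such as domain_resolves("verycool-products.com") would come back True for a parked domain just as it would for a genuine site, because the parking page is served from a perfectly valid address. A check like this can only spot domains that don't resolve at all, so telling a parked page from a real one needs some extra test on the page content itself.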

Verisign, one of the US' largest domain registrars, tried to sneak in its own brand of typo hijacking with its Site Finder system - something they quickly shuttered (to the benefit of both regular web users and network administrators alike). (More on Site Finder at Wikipedia and via what Google has to say about it... The irony's not lost on me).

Another problem with parked domain advertising is that it ties up many thousands of domains that would otherwise be available for people who might want to register them (especially those who have a valid use for them) - I'm currently trying to sort out a hijacked domain for a company I work for (it's a variation of their company name) and it's a bloody nightmare. ICANN fees are ludicrous ($1500 to file a domain name arbitration!) and there's not even a certainty you'll get the domain back, as the burden of proof is on you. Bad Faith usage on the part of the defendant certainly helps your case, but it's potentially a big investment with no return, and that's what squatters rely on.

With the situation as it is now, with Google and several other companies providing parked domain advertising for unscrupulous owners of very large portfolios of parked domains, it muddies the water for the rest of us and is most certainly not what the web is about. For shame, Google! It seems that their mantra of 'don't be evil' is fast becoming 'don't be ostensibly evil', especially given the company's past involvement with censorship in China and the previous debates about retention of surfers' search data...

...Maybe it's time to look for a new search engine? I still rely on Google for day-to-day activities, but I'm trying to change my habits; I already use the SSL version of Scroogle for anonymous Googling.